PwC's 29th Global CEO Survey covered 4,454 chief executives across 95 countries. One finding stood out: 56% report zero financial return from their AI investments.

Not a disappointing return. Not below expectations. Zero. Deloitte's 2026 State of AI in the Enterprise survey of 3,200+ business leaders found the same gap from a different angle: only 25% of organizations have moved even 40% of their AI experiments into production.

This is not a technology problem. GPT-5.4, Claude, Gemini, Snowflake Cortex, and Microsoft Foundry are capable systems. The models work. The data underneath them does not.

Organizations spending on AI development services are discovering that the bottleneck is not the model or the vendor. It is the data. Meanwhile, an ongoing study by METR has struggled even to measure AI's impact on the productivity of experienced developers. Its latest findings show no statistically significant improvement, and the study itself is breaking down because developers now refuse to work without AI tools. The tools are becoming table stakes. The differentiator is what you build them on top of.

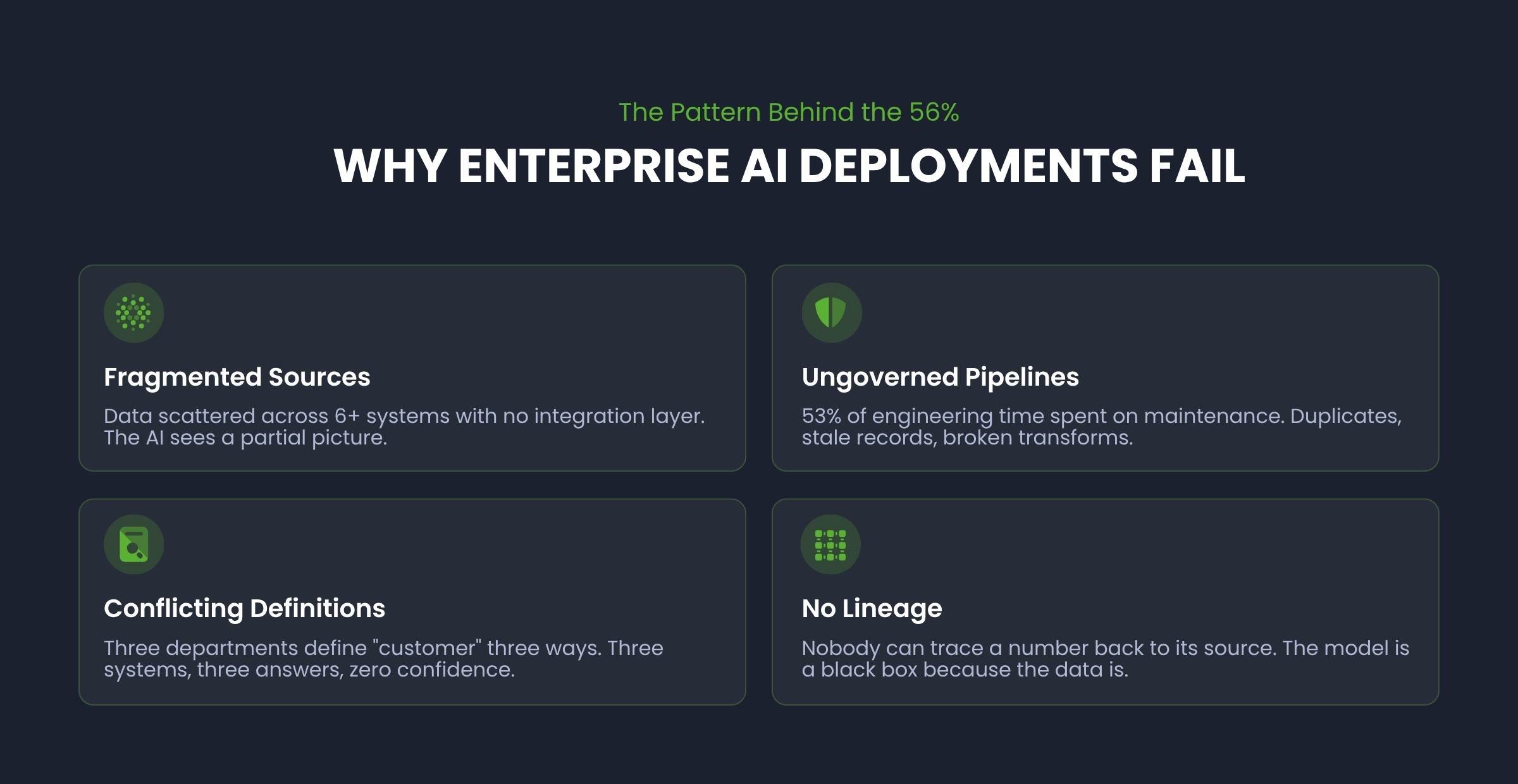

The Pattern Behind the 56%

The failure pattern is consistent regardless of industry or company size.

An enterprise decides to "do AI." Leadership approves a budget. A team selects a use case (usually document processing, customer analytics, or operational forecasting). They pick a vendor or build with an API. They deploy.

Nothing moves. No metric improves. The pilot just sits there.

The reason is almost always the same: the AI was deployed on top of data that was not ready to support it.

Fragmented sources. Customer data lives in Salesforce, operational data lives in an ERP, financial data lives in a legacy SQL Server warehouse. The AI model can only see what it is connected to. When data is scattered across six systems with no integration layer, the model operates on a partial picture.

Ungoverned pipelines. Data flows from source systems to analytics through ETL processes that nobody has audited in years. Duplicates, stale records, broken transforms. Without data governance, the pipeline delivers data, but whether that data is accurate is a separate question entirely.

Conflicting definitions. Marketing defines "customer" one way. Finance defines it another. Operations has a third definition. When an AI model asks "how many customers do we have," the answer depends on which system it queries. Three systems, three answers, zero confidence.

No lineage or documentation. Nobody can trace a number from the dashboard back to the source. When the AI produces an output that does not match expectations, there is no way to diagnose why. The model becomes a black box not because the algorithm is opaque, but because the data feeding it is.

This fragmentation is playing out publicly. A recent thread on a developer forum described the exact pattern: an AI-first strategy that devolved into every team picking their own AI tool, each generating its own data outputs, with no coordination layer. The result was more AI activity and less organizational intelligence.
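To make the duplicate, staleness, and definition problems concrete, here is a minimal Python sketch of the kind of checks a data assessment might run. The records, field names, one-year staleness threshold, and the three "customer" rules are all hypothetical; for brevity they sit in one toy table, whereas in a real environment each rule would live in a different system.

```python
from collections import Counter
from datetime import date

# Toy extract standing in for customer data. All field names, values, and
# the three "customer" rules below are hypothetical, for illustration only.
AS_OF = date(2025, 6, 30)
RECORDS = [
    {"id": "C1", "email": "a@x.com", "has_invoice": True,  "last_order": date(2025, 5, 1), "updated": date(2025, 6, 1)},
    {"id": "C2", "email": "b@x.com", "has_invoice": False, "last_order": None,             "updated": date(2023, 1, 15)},
    {"id": "C3", "email": "a@x.com", "has_invoice": True,  "last_order": date(2023, 2, 1), "updated": date(2025, 5, 20)},
    {"id": "C4", "email": "c@x.com", "has_invoice": False, "last_order": None,             "updated": date(2025, 4, 10)},
]

def find_duplicates(records, field):
    """Return values of `field` that appear on more than one record."""
    counts = Counter(r[field] for r in records)
    return sorted(value for value, n in counts.items() if n > 1)

def find_stale(records, as_of, max_age_days=365):
    """Return ids of records not updated within `max_age_days` of `as_of`."""
    return [r["id"] for r in records if (as_of - r["updated"]).days > max_age_days]

# Three teams, three rules for the same word.
CUSTOMER_DEFINITIONS = {
    "marketing": lambda r: r["email"] is not None,  # any contact record
    "finance": lambda r: r["has_invoice"],           # has been invoiced
    "operations": lambda r: (                        # ordered in the last year
        r["last_order"] is not None and (AS_OF - r["last_order"]).days <= 365
    ),
}

def customer_counts(records):
    """Count 'customers' under each team's definition."""
    return {team: sum(1 for r in records if rule(r))
            for team, rule in CUSTOMER_DEFINITIONS.items()}

if __name__ == "__main__":
    print("duplicate emails:", find_duplicates(RECORDS, "email"))
    print("stale records:", find_stale(RECORDS, AS_OF))
    print("customer counts:", customer_counts(RECORDS))
```

Run as-is, it reports one duplicate email (a@x.com), one stale record (C2), and three different customer counts (4, 2, and 1) from the same four rows.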

Not sure if these patterns exist in your organization? A data readiness assessment can map your current environment and identify gaps in 2-3 weeks, before you commit to an AI vendor or use case.

What the Other 44% Did Differently

The companies generating returns from AI did not start with the AI. They started with the data foundation.

Anthropic's 2026 State of AI Agents Report confirms this from the other direction: 80% of organizations report measurable returns from AI agents, but the top barriers to scaling are integration challenges (46%) and data quality requirements (42%). The technology is delivering. The data underneath it is what determines whether it scales.

This does not mean they waited years to "get the data perfect." Perfection is not the goal. AI readiness is.

They mapped what they had before deciding what to build

Before selecting an AI use case, they assessed their actual data environment. Where does the data live? What is the quality? What is documented and what is tribal knowledge? Where are the gaps?
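One way to make that inventory concrete is to record each source with a few yes/no readiness facts and let a script list the gaps. The sketch below assumes a hypothetical `Source` record, a 5% null-rate threshold, and invented values for the Salesforce, ERP, and SQL Server systems described earlier; it illustrates the idea rather than a complete assessment.

```python
from dataclasses import dataclass

@dataclass
class Source:
    """One system in the data landscape (hypothetical readiness fields)."""
    name: str
    domain: str          # what business data the system holds
    documented: bool     # written definitions exist, not just tribal knowledge
    integrated: bool     # connected to the shared data platform
    null_rate: float     # share of missing values in key fields, 0.0 to 1.0

def find_gaps(sources, max_null_rate=0.05):
    """Return (source name, gap) pairs for every readiness check a source fails."""
    gaps = []
    for s in sources:
        if not s.documented:
            gaps.append((s.name, "undocumented: definitions live in people's heads"))
        if not s.integrated:
            gaps.append((s.name, "not connected to the integration layer"))
        if s.null_rate > max_null_rate:
            gaps.append((s.name, f"key-field null rate {s.null_rate:.0%} exceeds {max_null_rate:.0%}"))
    return gaps

# Illustrative values only.
INVENTORY = [
    Source("Salesforce", "customers", documented=True, integrated=True, null_rate=0.02),
    Source("ERP", "operations", documented=False, integrated=True, null_rate=0.01),
    Source("SQL Server warehouse", "finance", documented=True, integrated=False, null_rate=0.12),
]

if __name__ == "__main__":
    for name, gap in find_gaps(INVENTORY):
        print(f"{name}: {gap}")
```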

This is the step most enterprises skip. The assumption is that "we have data, so we can do AI." But having data and having data that supports a specific AI use case are two different things.

One healthcare organization we worked with took this approach. During the data assessment, they discovered that data quality issues in their claims pipeline were causing revenue leakage that had gone undetected for years. Fixing the data foundation before deploying any analytics recovered $16M in Medicaid revenue. The value was in the assessment itself, not in an AI model.

They built the integration layer first

The companies seeing returns connected their data sources into a governed, reliable platform before deploying AI on top of it. The specific platform (Snowflake, Databricks, Microsoft Fabric) matters less than the principle: a single governed dataset that the AI can actually rely on.

Without this layer, every AI project becomes a data engineering project with a model attached. Fivetran's 2026 Enterprise Data Infrastructure Benchmark found that 53% of engineering time goes to pipeline maintenance alone, and 97% of enterprises report disruptions to AI or analytics initiatives from data infrastructure gaps. When your engineers spend more time maintaining pipelines than building the AI the project was funded to deliver, the ROI math does not work.
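At its core, that integration layer is a merge that turns per-system records into one row per entity while keeping track of where each piece came from. This is a minimal sketch assuming two hypothetical extracts and a normalized email address as the match key; real entity resolution is usually harder, and on a platform such as Snowflake, Databricks, or Fabric this logic would more likely live in governed SQL or pipeline code than in a Python dictionary.

```python
# Hypothetical extracts; ids, fields, and values are illustrative only.
CRM_ROWS = [
    {"crm_id": "S-1", "email": "Ana@Example.com ", "name": "Ana Ruiz"},
    {"crm_id": "S-2", "email": "li@example.com", "name": "Li Wei"},
]
ERP_ROWS = [
    {"erp_id": "E-9", "email": "ana@example.com", "open_orders": 3},
    {"erp_id": "E-7", "email": "sam@example.com", "open_orders": 1},
]

def match_key(email):
    """Assumed match rule: trimmed, lower-cased email address."""
    return email.strip().lower()

def build_customer_table(crm_rows, erp_rows):
    """Merge both systems into one row per customer, recording source ids."""
    table = {}
    for system, id_field, rows in (("crm", "crm_id", crm_rows), ("erp", "erp_id", erp_rows)):
        for row in rows:
            key = match_key(row["email"])
            entry = table.setdefault(key, {"email": key, "sources": {}})
            entry["sources"][system] = row[id_field]
            # Copy remaining attributes; a later system overwrites a shared
            # field here, where a real layer would apply explicit precedence.
            for field, value in row.items():
                if field not in (id_field, "email"):
                    entry[field] = value
    return table

def single_source(table):
    """Customers known to only one system: candidates for a matching review."""
    return sorted(key for key, entry in table.items() if len(entry["sources"]) < 2)

if __name__ == "__main__":
    customers = build_customer_table(CRM_ROWS, ERP_ROWS)
    for row in customers.values():
        print(row)
    print("single-source customers:", single_source(customers))
```

The `sources` map doubles as basic lineage: each merged row records which system ids it was built from, and `single_source` surfaces customers that only one system knows about.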

They proved value on real data, not demo data

A proof of concept that runs on sample data proves nothing about production viability. The companies getting returns built their proofs of value on actual production data, including all its messiness, edge cases, and volume.

If the outcome holds against real data, it will hold in production. If it only works on a curated sample, it will fail the moment it meets the operational environment.
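A quick way to see what a curated sample hides is to profile the same field in the sample and in production and compare the two. The sketch below uses a hypothetical `claim_type` field and toy rows; the point is the comparison, not these particular metrics.

```python
def profile(rows, field):
    """Row count, null rate, and distinct non-null values for one field."""
    values = [row.get(field) for row in rows]
    present = [v for v in values if v is not None]
    null_rate = (len(values) - len(present)) / len(values) if values else 0.0
    return {"rows": len(values), "null_rate": null_rate, "categories": set(present)}

def sample_vs_production(sample, production, field):
    """Summarize what production contains that the sample did not show."""
    s, p = profile(sample, field), profile(production, field)
    return {
        "unseen_categories": sorted(p["categories"] - s["categories"]),
        "null_rate_sample": s["null_rate"],
        "null_rate_production": p["null_rate"],
        "volume_ratio": p["rows"] / s["rows"] if s["rows"] else None,
    }

# Toy rows; the claim_type field and its values are hypothetical.
SAMPLE = [{"claim_type": "inpatient"}, {"claim_type": "outpatient"}]
PRODUCTION = [
    {"claim_type": "inpatient"},
    {"claim_type": "outpatient"},
    {"claim_type": None},
    {"claim_type": "pharmacy"},
]

if __name__ == "__main__":
    print(sample_vs_production(SAMPLE, PRODUCTION, "claim_type"))
```

Here the sample looks clean, while production has a category the sample never showed and a 25% null rate in the same field.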

They planned for production from day one

A model running in a notebook is not a deployed AI system. Production AI requires monitoring, governance, retraining schedules, error handling, and a team that understands how to maintain it. The 44% planned for production from day one, not after a successful demo convinced someone to fund the next phase. As one healthcare AI leader put it in Deloitte's survey: "If there is no coherent AI strategy in organizations, you are likely to see pilot fatigue. Without a clear roadmap, executing a hundred pilots just leads to poor results and failed value creation."
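As a small illustration of the monitoring piece, here is a sketch of a per-batch check on the data feeding a production model: is it fresh, and is its volume close to normal? The 24-hour lag limit, the 30% volume tolerance, and the function name are assumptions; governance, retraining, and error handling would sit alongside checks like this, not inside them.

```python
from datetime import datetime, timedelta

def check_batch(last_loaded, row_count, baseline_rows, now,
                max_lag=timedelta(hours=24), tolerance=0.30):
    """Return alert strings for one load of a model's input data (empty list = healthy)."""
    alerts = []
    lag = now - last_loaded
    if lag > max_lag:
        alerts.append(f"stale: last load was {lag} ago")
    if baseline_rows > 0:
        # Relative change in row count versus the expected baseline.
        drift = abs(row_count - baseline_rows) / baseline_rows
        if drift > tolerance:
            alerts.append(f"volume drift {drift:.0%} vs baseline")
    return alerts

if __name__ == "__main__":
    now = datetime(2025, 6, 30, 12, 0)
    print(check_batch(now - timedelta(hours=1), 1_050, 1_000, now))  # healthy
    print(check_batch(now - timedelta(days=2), 600, 1_000, now))     # two alerts
```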

These four practices sound straightforward. In our experience, the hard part is sequencing them correctly for a specific enterprise, with its specific systems, its specific data quality challenges, and its specific organizational constraints.

Our Approach: Workshop. Prove. Scale.

Through our AI consulting engagements, we see this pattern in every enterprise AI conversation. The interest in AI is genuine. The readiness to support it is rarely there.

We built the AI Catalyst Framework around three phases that address this reality. We call the approach Workshop. Prove. Scale.

Workshop

Map the actual data landscape. What systems exist? What is the quality? Where are the integration gaps? What AI use cases are realistic given the current state? This phase prevents the most common failure mode: choosing an AI use case that the data cannot support.
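One useful output of this phase is a mapping from candidate use cases to the data they depend on. A minimal sketch of that triage follows, using hypothetical source names and readiness flags, with use cases drawn from examples elsewhere in this post. A source missing from the readiness map is treated as not ready.

```python
# Hypothetical readiness flags from the workshop's inventory
# (True = governed, integrated, and documented enough to build on).
SOURCE_READY = {"CRM": True, "ERP": True, "MES": False, "core_banking": False}

# Candidate use cases and the sources each one depends on (illustrative).
USE_CASES = {
    "customer analytics": ["CRM", "ERP"],
    "predictive maintenance": ["MES", "ERP"],
    "fraud detection": ["core_banking"],
}

def triage(use_cases, source_ready):
    """Split use cases into (ready now, {blocked use case: blocking sources})."""
    ready, blocked = [], {}
    for name, sources in use_cases.items():
        # Unknown sources count as not ready: unassessed data is a gap.
        missing = [s for s in sources if not source_ready.get(s, False)]
        if missing:
            blocked[name] = missing
        else:
            ready.append(name)
    return ready, blocked

if __name__ == "__main__":
    ready, blocked = triage(USE_CASES, SOURCE_READY)
    print("ready now:", ready)
    print("blocked:", blocked)
```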

Our 360 AI Workshop is designed to deliver this first phase in two to three weeks, giving your leadership team a concrete readiness picture and a prioritized use case roadmap.

Prove

Build a proof of value against real production data. Not a slide deck with projected ROI. A working prototype that processes actual operational data and produces a measurable business outcome. If it works here, it works in production.

Scale

Operationalize what the proof validated. Production deployment with monitoring, governance, pipeline reliability, and the organizational structure to maintain it long-term.

For a pharmaceutical services company, the Scale phase meant mobilizing a team of 13 in under three weeks and building a managed data operation that now runs through 2027. Production AI requires production-grade teams.

This is not a platform you buy. There is no AI gateway product that solves data fragmentation. The work is specific to your environment, your systems, your data. That is why it requires people who understand enterprise data architecture, not a vendor login.

The Real Cost of Skipping the Data Foundation

The 56% of CEOs reporting zero return did not fail because they chose the wrong AI model or the wrong vendor. They failed because they treated AI as a technology purchase rather than a capability that requires a functioning data infrastructure beneath it.

The cost extends beyond the AI investment itself. It includes the opportunity cost: months or years spent deploying AI that could not deliver, internal credibility lost when the initiative produces no results, and organizational reluctance to try again. In manufacturing, we see this with predictive maintenance models deployed on fragmented MES data. In financial services, fraud detection models fail when transaction data is siloed across legacy core banking systems.

When a CEO tells PwC "we got zero return from AI," what they often mean is: "we spent money on a model that could not do what it was supposed to because the data was not there."

The fix is earlier in the pipeline than most companies think.

How to Evaluate AI Readiness Before You Invest

If your enterprise is evaluating AI, or if a previous AI initiative has underdelivered, the first question is not "which model should we use?" The first question is "what does our data look like?"

Be skeptical of vendors promising AI ROI in 120 days if they have not assessed your data first. A realistic timeline depends entirely on the state of your data foundation. For enterprises with governed, integrated data, initial value can emerge in weeks. For those starting from fragmented systems, the honest answer is that the data work comes first. Skipping it is how you end up in the 56%.

Before engaging AI development services, start with the data landscape assessment. Not the AI vendor selection. Not the use case prioritization. The data.

The 44% started there. The other 56% started with the technology and spent the next two years explaining why it had not delivered.

Not sure where your organization stands?

Our 360 AI Workshop maps your data landscape and identifies realistic AI use cases in 2-3 weeks.

Frequently Asked Questions

Why do enterprise AI projects fail? Most enterprise AI projects fail because they are deployed on data that is not ready to support them. Fragmented sources, ungoverned pipelines, conflicting definitions, and missing documentation mean the AI model operates on incomplete or inaccurate information.

What is AI readiness? AI readiness means having governed, integrated, and documented data that can support specific AI use cases. It does not mean perfect data. It means data that is reliable enough for a model to produce trustworthy outputs in a production environment.

How much does enterprise AI implementation cost? Enterprise AI pilots typically range from $150K to $500K. When 60-80% of that budget goes to data wrangling rather than model development, the AI ROI math breaks before the project starts. A data readiness assessment ($15-25K) can prevent this.

What should companies do before investing in AI? Assess the data landscape first. Map source systems, evaluate data quality, identify integration gaps, and determine which AI use cases the current data can realistically support. This assessment is the starting point, not the vendor selection.

What is a data foundation for AI? A data foundation is a connected, governed set of data sources with quality controls, lineage tracking, and a reliable integration layer. It is the infrastructure that makes AI outputs trustworthy and repeatable in production.
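The cost answer above can be multiplied out directly. This sketch only combines the ranges quoted there ($150K to $500K pilots, 60-80% spent on data wrangling), under the simplifying assumption that the wrangling share applies to the entire pilot budget; it is arithmetic, not a cost model.

```python
from itertools import product

PILOT_BUDGETS = (150_000, 500_000)  # FAQ range, USD
WRANGLING_PCT = (60, 80)            # FAQ range, percent of budget

def budget_split(pilot_budget, wrangling_pct):
    """Return (data-wrangling spend, remainder left for model work), in whole dollars."""
    wrangling = pilot_budget * wrangling_pct // 100
    return wrangling, pilot_budget - wrangling

def scenarios():
    """Every combination of the FAQ's budget and wrangling-share bounds."""
    return {
        (budget, pct): budget_split(budget, pct)
        for budget, pct in product(PILOT_BUDGETS, WRANGLING_PCT)
    }

if __name__ == "__main__":
    for (budget, pct), (wrangling, model) in scenarios().items():
        print(f"${budget:,} pilot, {pct}% wrangling -> ${wrangling:,} on data, ${model:,} on the model")
```

Across the four combinations, data wrangling absorbs $90,000 to $400,000, and the money left for model work ranges from $30,000 to $200,000.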

Smart Data has spent two decades building data platforms and enterprise software for organizations in healthcare, manufacturing, financial services, and distribution. 230+ employees. Clients include Fortune 500 healthcare payers, national distributors, and mid-market manufacturers across the US.

This blog is part of our AI Enablement Guide, a comprehensive resource covering data maturity assessment, use cases by readiness level, industry perspectives, and the Workshop. Prove. Scale. framework.